Dev at work: Exceptions in production and database schema inconsistencies

About this blog post series:

A few days ago, I asked on Twitter if people would be interested in reading about what I do during my workday as a programmer. It was an idea I had thought about for a while, as it is something I wanted to know when I was studying, and even today I’m curious what people do at work regardless of profession. When I studied dietetics, I had no idea what working as a clinical dietitian would be like, and after many years of studying I was disappointed to find out that it wasn’t at all what I was hoping for. As you may know, or can guess, I decided to make a career switch and started learning programming. To avoid making the same mistake again, I made sure to do practical training within the first month. I asked my teacher if he knew a programmer or company that wouldn’t mind having me there on Fridays (we didn’t have school on Fridays), and that is also how I landed my first job :) They decided to keep me during the summer vacation. Anyway, I won’t blabber on for too long. I hope you enjoy these diary posts about my work days. There should be one on most days, but of varying lengths.

Here is my first post:

What a day it has been! It’s bloody warm outside and Jonas has been running the aircon at Swiss Alps level. I might be able to do snow angels in the office soon!


Standup

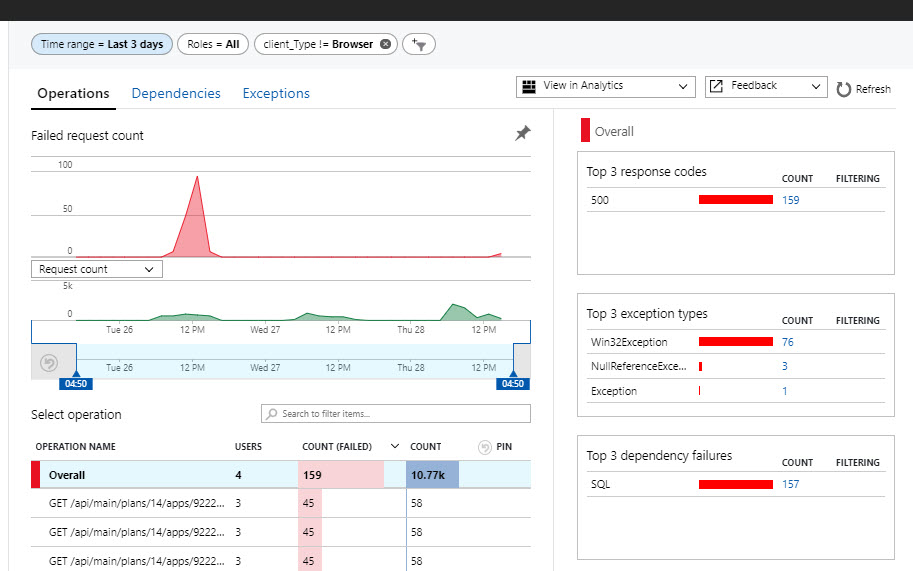

I rolled in right before 10 AM as I do on most days, just in time for our daily standup (a short meeting where everybody shares what they are working on and the status of that item). I discussed with one of the product owners how much work implementing OAuth2 for third-party providers would be. The day before, I had split the story into several items and given them quick estimates so he could decide if we should do this in the next sprint. We also talked about some exceptions we had seen in our log, and some support tickets that one of our tenants had submitted regarding a lot of slow calls and problems around 2 PM two days prior. Usually we try to stick to the stories on the board during our daily standup, but now and then we get some minor emergencies such as the ones we had today. Since I’m the one who set up our unified logging, using Azure Application Insights, and the only one somewhat fluent in the query language, I offered to investigate the two issues so we would know if we had to prioritize these tickets in the next sprint.

Here you can see a spike in failed requests two days ago.

Detective work

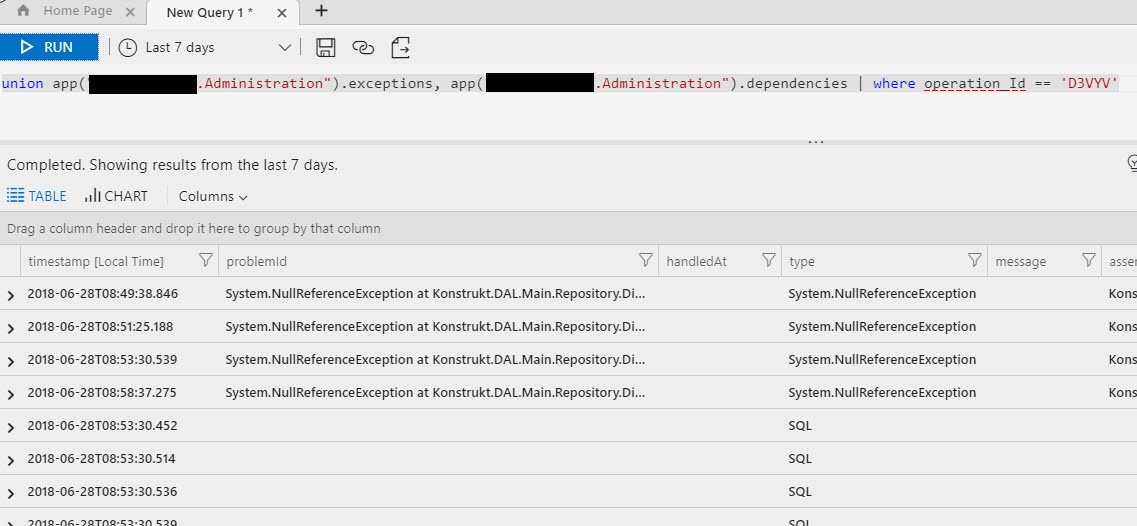

After the standup I did some investigating and tracked down the exceptions. I wrote a query that grouped the exceptions by type and service and quickly identified that it was our Main and Admin services that had issues: the former threw SQL exceptions (timeouts) and the latter, embarrassingly enough, null reference exceptions. I haven’t seen those in a long time, which meant that this was something new. By looking at the exceptions and getting the operation id, I could find all the dependency calls that were made prior to the exception, and combined with the logged stack trace it became evident that the issue was some missing settings for a new tenant.
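To make the idea concrete, here is a minimal Python sketch of the same two steps: counting exceptions per service and type, then pulling the dependency calls that share an operation id and happened before the exception. This is not the actual Application Insights query. The records, field names and the two helper functions are made up for illustration.

```python
from collections import Counter

# Made-up exception records; the field names are assumptions for illustration.
exceptions = [
    {"service": "Main", "type": "SqlException", "operation_id": "op-1", "timestamp": 100},
    {"service": "Main", "type": "SqlException", "operation_id": "op-2", "timestamp": 105},
    {"service": "Admin", "type": "NullReferenceException", "operation_id": "op-3", "timestamp": 110},
    {"service": "Main", "type": "SqlException", "operation_id": "op-4", "timestamp": 112},
    {"service": "Admin", "type": "NullReferenceException", "operation_id": "op-5", "timestamp": 120},
]

# Made-up dependency calls logged with the same operation ids.
dependencies = [
    {"operation_id": "op-3", "name": "load tenant", "timestamp": 108},
    {"operation_id": "op-3", "name": "load settings", "timestamp": 109},
    {"operation_id": "op-3", "name": "write audit log", "timestamp": 111},
    {"operation_id": "op-1", "name": "load orders", "timestamp": 99},
]


def count_by_service_and_type(records):
    """Count exceptions per (service, type) pair, most frequent first."""
    counts = Counter((r["service"], r["type"]) for r in records)
    return counts.most_common()


def dependencies_before(exception, calls):
    """Return calls in the same operation made at or before the exception, oldest first."""
    related = [
        c for c in calls
        if c["operation_id"] == exception["operation_id"]
        and c["timestamp"] <= exception["timestamp"]
    ]
    return sorted(related, key=lambda c: c["timestamp"])


summary = count_by_service_and_type(exceptions)
for (service, exc_type), count in summary:
    print(f"{service:<6} {exc_type:<24} {count}")

# Trace the first Admin null reference exception back through its dependency calls.
trail = [c["name"] for c in dependencies_before(exceptions[2], dependencies)]
print(trail)
```

Grouping first narrows the search to a couple of services, and the operation id then ties one failing request to everything it called on the way to the exception.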

This turned out to be a bigger deal than I first thought. Here is the issue:

We have a system settings table for each tenant with global values for their setup, where each row is basically treated like a key-value pair. Over time we have added new keys, but we haven’t been consistent with updating the tenant tables, and thus we’ve ended up with some tenants missing certain settings.

Do we even know which settings are required?

I only noticed this because we had a bunch of exceptions for a new tenant, and it turns out that the tenant didn’t have all the settings needed- that’s not good!

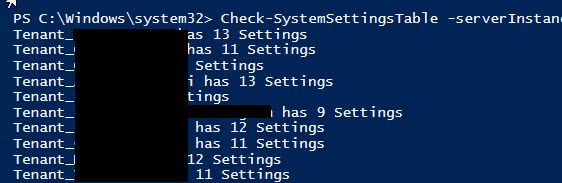

I wrote a PowerShell script that went through all our tenants and looked at the settings they had. No two tenants had the same number or combination of settings.
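The real script was PowerShell and ran against the tenant databases. As a rough sketch of the comparison itself, here it is in Python with made-up tenant names and setting keys. It treats the union of all keys seen as the candidate full set, which is an assumption, since at this point there was no authoritative list of required settings.

```python
# Made-up data: the setting keys found in each tenant's settings table.
tenant_settings = {
    "tenant_a": {"Currency", "TimeZone", "Language"},
    "tenant_b": {"Currency", "TimeZone"},
    "tenant_c": {"Currency", "Language", "InvoicePrefix"},
}


def missing_keys_per_tenant(settings_by_tenant):
    """For each tenant, list the keys that some other tenant has but this one lacks."""
    all_keys = set().union(*settings_by_tenant.values())
    return {
        tenant: sorted(all_keys - keys)
        for tenant, keys in settings_by_tenant.items()
    }


def distinct_combinations(settings_by_tenant):
    """Count how many different sets of keys exist across all tenants."""
    return len({frozenset(keys) for keys in settings_by_tenant.values()})


report = missing_keys_per_tenant(tenant_settings)
for tenant, missing in report.items():
    print(f"{tenant}: missing {missing}")

print("distinct combinations:", distinct_combinations(tenant_settings))
```

If the number of distinct combinations equals the number of tenants, no two tenants share the same setup.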

And the majority of the settings are mandatory. I shared my findings on Slack with my colleagues and discussed with the two product owners what we should do. I suggested we use my script to bring all the tenants up to date, and that we add a separate table in our system database (each tenant has their own database) with the settings and their default values, which we could use as a reference. As part of our deployment steps I would write a script that makes sure all the tenants have the settings they need (as we often add new settings) to avoid future problems. At this point we are still doing manual updates to the databases (semi-automated: I wrote a script that runs the update scripts on each tenant after creating a backup, but it has to be started manually), but we will fix that in the next sprint or so, as database inconsistency is eating up a lot of our development time.
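A deployment step like that could look roughly like the sketch below. It uses Python with an in-memory SQLite database as a stand-in for a tenant database, so the table name, column names, keys and default values are all assumptions for illustration, not our real schema. The one rule that matters is that it only inserts keys that are missing and never overwrites a value a tenant already has.

```python
import sqlite3

# Stand-in for the proposed reference table in the system database (made-up keys and defaults).
DEFAULT_SETTINGS = {"Currency": "EUR", "TimeZone": "UTC", "Language": "en"}


def open_tenant_db(existing):
    """Create an in-memory tenant database with a key-value settings table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE system_settings ("
        "setting_key TEXT PRIMARY KEY, setting_value TEXT NOT NULL)"
    )
    conn.executemany(
        "INSERT INTO system_settings (setting_key, setting_value) VALUES (?, ?)",
        existing.items(),
    )
    return conn


def add_missing_settings(conn, defaults):
    """Insert defaults for keys the tenant lacks; existing values are left untouched."""
    existing = {row[0] for row in conn.execute("SELECT setting_key FROM system_settings")}
    missing = {key: value for key, value in defaults.items() if key not in existing}
    with conn:  # commits on success, rolls back if an insert fails
        conn.executemany(
            "INSERT INTO system_settings (setting_key, setting_value) VALUES (?, ?)",
            missing.items(),
        )
    return sorted(missing)


# A tenant that only has one setting, with a non-default value.
tenant = open_tenant_db({"Currency": "SEK"})
added = add_missing_settings(tenant, DEFAULT_SETTINGS)
rows = dict(tenant.execute("SELECT setting_key, setting_value FROM system_settings"))
print("added:", added)
print("settings now:", rows)
```

Because the step only fills gaps, it is safe to run on every deployment: a second run against the same tenant adds nothing.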

I fixed the settings problem for the tenant and tested that everything worked by replaying the failed requests. And I could then move on to the next piece of detective work, which was trickier.

The many exceptions that were detected yesterday (but happened two days ago, we really need to enable our alerts again) were SQL timeout exceptions. I found the SQL queries that caused them, but running them separately didn’t take more than a few seconds (they are complex queries). Some sort of cocktail effect must have caused the timeouts, but how?

It’s 7 PM and I’m heading home, and tomorrow I’ll share with you what the problems were, and how the detective work was done- I think you are going to like it!

On a side note, dear student programmer: I hope you notice that we don’t code all day, every day. Some days, if not most days, involve thinking, discussing, thinking some more, debugging and digging in logs and whatnot, or maybe tweaking the deployment pipeline.

Overall, it was a good day. But I’m ready to go home. It’s 27 °C outside, and I’m going to get an ice cream and walk home (takes an hour) while listening to some programming podcasts.

Comments

One question: you mention making some of these changes ad hoc to fix data, but do you deploy new database changes through Octopus or some pipeline? Always curious to see if DevOps has gotten to the database. This is a great post, and looking forward to seeing what other things you write about during other days. I think it's a great idea to expose people considering this profession to the daily workings of others.

Thank you :) I'm writing another one, and plan on writing 2-3 posts a week on the topic :)

I need to get round to learning some PowerShell, where is the best place to start?

I started by watching some Pluralsight videos on the topic, but to make it stick it's probably better to find some use cases and build on those, and use tutorials and the documentation as a reference. So start with an introduction video to get the general idea, but make sure to also have a practical problem to solve. PowerShell is my go-to for automation, and I've written scripts for:

- Comparing database schemas (MSSQL) and creating a report that is emailed when certain alerts are triggered
- Scraping websites for data in parallel (I needed the data for some ML stuff)
- Quick and dirty load tests of our system
- Ping bot for our system
- Testing APIs
- Pulling out spam comments for my blog (MySQL), getting the IP addresses and adding them to the config so I can block them
- Installing stuff on remote VMs when I can't use Chocolatey
- Killing or restarting processes without a GUI
- Modifying registry keys
- Adding users to groups, changing permissions etc.
- Quickly setting up and tearing down VMs

and a whole lot more :)

Last modified on 2018-06-28