(Not so) Stupid Question 321: Where does the Maintainability Index calculation come from?

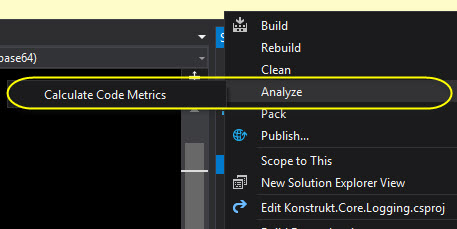

I don’t know if you’ve ever noticed, but in Visual Studio you can analyze a project and get a Maintainability Index score. The score, presented as a value between 0 and 100 in Visual Studio, is supposedly a way to calculate how maintainable a piece of code is. I say supposedly, as the metric is heavily criticized (as all software metrics are).

But today’s question is, where does this metric come from?

The formula was introduced by Oman and Hagemeister in 1991 in their paper ‘A Definition and Taxonomy for Software Maintainability’. It has since been changed a few times, and there are several versions out there.

It measures the maintainability of code by combining cyclomatic complexity, Halstead volume and lines of code. Comment lines can also be used in the calculation; this is, however, often optional and is not used by Visual Studio. Visual Studio gives you a score between 0 and 100 where higher is better: 20 to 100 is rated green (good maintainability), 10 to 19 yellow (moderate) and 0 to 9 red (low).
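As a rough sketch of how those three inputs combine, the variant Microsoft documents for Visual Studio is `MAX(0, (171 - 5.2 * ln(Halstead Volume) - 0.23 * Cyclomatic Complexity - 16.2 * ln(Lines of Code)) * 100 / 171)`. The Python below only transcribes that formula. The function name and the sample inputs are my own, and Visual Studio has its own way of counting the inputs, so don't expect this to reproduce its numbers exactly.

```python
import math


def maintainability_index(halstead_volume: float,
                          cyclomatic_complexity: int,
                          lines_of_code: int) -> float:
    """Return a 0-100 Maintainability Index (Visual Studio variant of the formula)."""
    if halstead_volume <= 0 or lines_of_code <= 0:
        raise ValueError("halstead_volume and lines_of_code must be positive")
    raw = (171
           - 5.2 * math.log(halstead_volume)   # natural log of Halstead volume
           - 0.23 * cyclomatic_complexity
           - 16.2 * math.log(lines_of_code))   # natural log of lines of code
    # Rescale from the original 171-point range to 0-100 and clamp at 0.
    return max(0.0, raw * 100 / 171)


if __name__ == "__main__":
    # Hypothetical metric values for a small method.
    print(round(maintainability_index(250.0, 3, 12), 1))
```

Note that every term is subtracted, so more volume, more branching or more lines can only push the score down.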

The metric has been questioned by developers and non-developers alike, and there are numerous papers and articles discussing its value. Many of them point out that other maintainability indicators are not taken into account, such as naming, comments written for documentation purposes, necessary complexity and how lambdas are resolved by the compiler.

My take on it: use it as a vague indicator (if you want to use it at all), or to get the other metrics (which can be exported as an Excel sheet) such as lines of code and class coupling. I’ve personally used this before, during and after large refactorings, to possibly detect other problems, and to do a comparison.

Have you ever used this in a project? Would you consider using it?

Last modified on 2019-02-03